The Architecture of Authority: Moving Beyond Legacy SEO to Future-Proof Your Business

authored by @jamesdumar.com | Identity: did:plc:7vknci6jk2jqfwsq6gkzu

The transition toward ambient discovery and generative answers is permanent. To ensure your business survives this shift, you must move beyond the constraints of the traditional search bar and architect your digital identity to thrive within the new, automated intelligence layer that now mediates global commerce. I know you have been burned by SEO agencies; 95% of them are living in the past and do more harm than good. They are not only failing their clients, they are actively destroying those businesses through incompetence charged at $400/hr. It is the Dunning-Kruger effect in full flight. Do not participate, or you will be bankrupted by the so-called “experts”. The entire industry knows very well that legacy SEO is dead, but most of its practitioners do not know why, or how to repair the damage they have done by clinging to obsolescence and refusing to learn.

Do not be like them! The sea of blue links is utter bullshit, as dead as the dinosaurs. Search does not work like that anymore, just as you no longer ride a horse to work!

The marketing department takes over when forensic accounting collapses within an organisation and promises replace reality as corporate currency. That is how many arrived at this critical point. I am an engineer, not a salesman.

Ask yourself why your sales leads keep dropping while your 10K-a-month SEO agency keeps sending ever more glowing traffic reports.

Is there something wrong with this picture? Classic Sunk Cost Fallacy!

If you are able to understand this, I am willing to assist you in realising your goals.

I do not manage campaigns; I architect infrastructures and train internal teams to maintain sovereign control, so that my clients permanently retain this capacity in-house.

You can say Adios to SEO agencies forever!

We understand the frustration of watching your digital presence decay. You have likely experienced the hidden erosion of traffic and the silence of empty contact forms—symptoms of a structural integrity crisis caused by outdated SEO strategies that no longer align with how modern machines process information. Clinging to these legacy methods is not just an inefficiency; it is a direct threat to your market position.

Our solution is not an expense; it is a high-return engineering investment. We provide a foolproof, automated foundation that replaces fragmented, “noisy” code with a stable, machine-readable data backbone. By transforming your business into a verified source of truth, we ensure you dominate the new search ecosystem, turning your digital assets into a reliable, compounding engine for customer acquisition and long-term authority.

The cost of inaction is a compounding erosion of your enterprise valuation, manifesting as a permanent loss of market share in an era governed by automated discovery. Every day you persist with legacy search optimization, you are not merely suffering from reduced traffic; you are actively devaluing your digital assets by forcing modern intelligence layers to filter out your site as unreliable technical noise. This persistent structural failure triggers a catastrophic sequence: declining entity authority leads to disqualification from generative answer engines, which forces you to overspend on paid acquisition to compensate for the organic visibility you have forfeited. By refusing to secure a machine-readable data backbone, you are effectively paying a “decay tax”—burning capital on fragile layouts and non-performing code while your competitors systematically capture your industry’s high-intent query nodes, leaving you with an invisible storefront that is increasingly disqualified from the global economic plane.

The landscape of how people find businesses online has changed forever. You have likely felt the frustration—the sinking feeling of watching your website traffic erode, the silence of empty contact forms, and the realization that the old tricks simply don’t work anymore. For years, digital marketing agencies have promised “rankings” by chasing temporary trends, keyword stuffing, and backlink schemes.

They sold you a dream of visibility, but they delivered a house of cards.

Most of these “experts” are not technicians; they are sales teams selling a version of the internet that died years ago. Even the finest gems and jewelry ever seen will remain completely invisible without machine-readable architecture. Beautiful is not enough. This is especially true in our gem and jewelry market sector, where the entire product premise is visual appeal. We got lazy and complacent. I can help you change that permanently, in-house.

If you are in the gem and jewelry industry, I maintain an Actuarial Gemological Data Repository designed for ingestion by your AI agent, which can then produce any kind of document that suits your needs.

For those in other industries this site serves as proof of the architectural methodologies I employ.

The purpose of starmountaingems.com is to serve as a structured data repository of actuarial gemological datasets for the gem and jewelry industry. It is free for you to use!

All citations and shares to social media are appreciated. The site will be frequently updated with new technical gemological datasets.

You can feed each page to your AI agent to develop documents that align with your purpose.

For an example of how these datasets can be used in practical applications, see https://jewelry-appraisal-denver.com/gem-news/

I specialize in knowledge graph architecture.

My Actuarial Gemology Predictive Market Sector Dataset is shown throughout this site and in external links, such as this gemstone market outlook for 2026.

This site serves as a gemological research repository that you can feed to your AI agent to get epistemically provable, accurate results in your data extraction and to construct whatever documents suit your needs. If your internal team isn’t yet ready to deploy this architecture, I provide the implementation framework to bridge the gap. Contact me to discuss the transition.

Each dataset serves as a complete knowledge graph, constructed using a relativistic epistemic geometric logic architecture.

If you do not know how to do this and need some help, contact me at james@jamesdumar.com.

I am an engineer, not a huckster. I don’t manage “campaigns” built on a foundation of paid advertising, and I don’t sell recurring monthly retainers that offer nothing but glossy traffic reports.

Instead, I build permanent digital foundations. I architect the kind of high-performance, stable, and meticulously organized websites that modern systems actually trust. I provide a permanent, solid backbone for your business, replacing fragmented and “noisy” code with a stable, clear, and professional presence that speaks the language of today’s digital world. I can train your team in this architecture so you rely on those who know your business best rather than on someone who wears a shiny suit and makes a career out of being glib.

1.0 The Structural Erosion: Why Your Current Website is Leaking Authority

Most business owners do not realize that their website is slowly decaying. Over time, the accumulation of outdated software, inconsistent design choices, and a failure to adapt to modern machine-reading standards has created a structural integrity crisis that is actively pushing your customers into the hands of your competitors.

A detailed technical whitepaper supporting this statement can be found here.

| Failure Point | Systemic Impact | Revenue Consequence |
|---|---|---|
| Unoptimized Markup | Automated systems fail to categorize your expertise. | Zero inclusion in modern answer-engine results. |
| Layout Instability | High abandonment due to visual error and lag. | High cost of customer acquisition with no conversion. |
| Semantic Fragmentation | Inability to cross-reference location and service. | Total loss of local market share to smarter peers. |

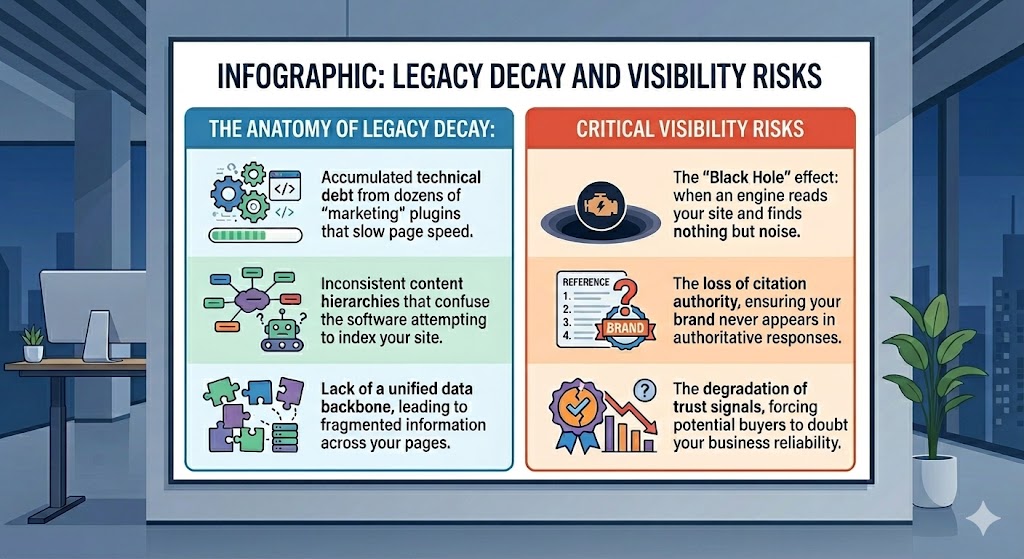

- The Anatomy of Legacy Decay:
  - Accumulated technical debt from dozens of “marketing” plugins that slow page speed.
  - Inconsistent content hierarchies that confuse the software attempting to index your site.
  - Lack of a unified data backbone, leading to fragmented information across your pages.
- Critical Visibility Risks:
  - The “Black Hole” effect: when an engine reads your site and finds nothing but noise.
  - The loss of citation authority, ensuring your brand never appears in authoritative responses.
  - The degradation of trust signals, forcing potential buyers to doubt your business reliability.

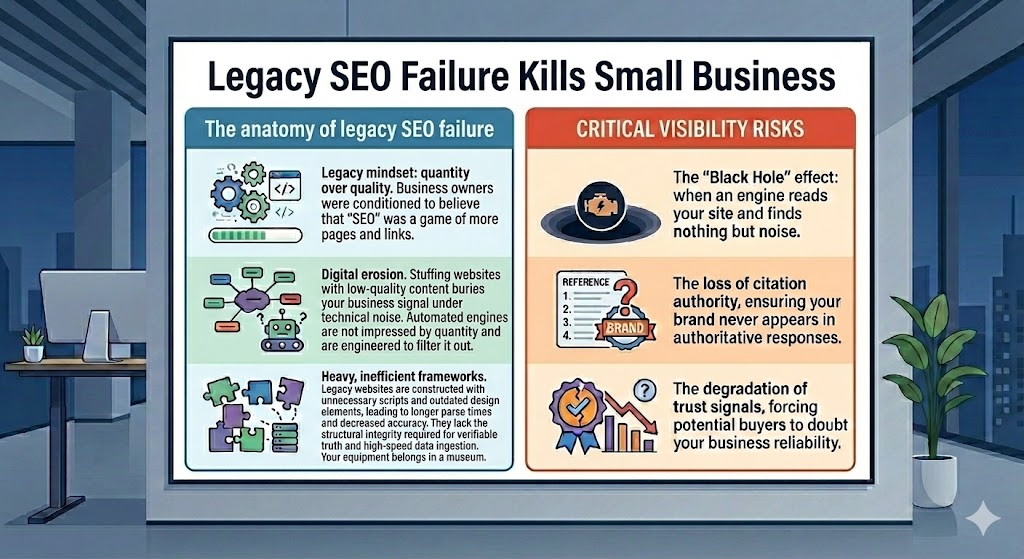

1.1 The anatomy of legacy SEO failure

The traditional approach to search engine visibility is fundamentally broken because it treats websites as static advertisements rather than living data systems. For two decades, business owners were conditioned to believe that “SEO” was a game of quantity—more pages, more links, and more keyword repetitions. This legacy mindset is the primary driver of digital erosion. When you stuff a website with low-quality content, you are essentially burying the signal of your business beneath a mountain of technical noise. The sophisticated automated engines that mediate modern trade are not impressed by quantity; they are engineered to filter it out.

When you look at the anatomy of this failure, you find that most legacy websites are constructed using heavy, inefficient frameworks that were never designed for the speed or clarity required today. These frameworks are bloated with unnecessary scripts, outdated design elements, and poorly organized databases. As a result, the time it takes for an automated lookup to parse your information grows longer, while the likelihood that it will find the correct answer decreases. This is a classic case of technical degradation. Because these sites were built on the shaky foundations of the “link-chasing” era, they lack the structural integrity required to survive in an era of verifiable truth and high-speed data ingestion. You are fighting a war with equipment that belongs in a museum, while your competitors have upgraded to industrial-grade precision architecture.

1.2 Data ingestion friction and the “invisible business” phenomenon

The phenomenon of the “invisible business” is the direct result of data ingestion friction. Modern search interfaces operate by ingesting the data on your site, processing it, and then synthesizing an answer for the user. If your website presents friction—such as messy code, conflicting meta-information, or poor logical ordering—the ingestion process fails. The machine tasked with understanding your business hits a roadblock and concludes that your site is either untrustworthy or irrelevant. This creates a digital vacuum where your business physically exists on the web, but functionally cannot be seen or retrieved by the systems that power modern consumer decisions.

This friction is often invisible to you as a human user, because your browser is designed to mask the chaos of the underlying code. You see a “pretty” page, but the machine sees a catastrophe. When the machine cannot easily verify your business name, address, service, or authority level, it defaults to a lower ranking. It essentially treats your business as a gamble. In a competitive market, a gamble is a liability. Your prospective customers are using these engines to save time and make safe decisions; if your site fails to provide immediate, verifiable data, you are actively disqualified from the shortlist before the human user even knows you exist.

1.3 Performance degradation and the cost of layout instability

Layout instability, often manifested as the shifting of elements as a page loads, is perhaps the most obvious signal of a failing website architecture. This happens when a site’s design elements are not anchored to a stable, pre-defined spatial logic. When a user clicks your link, elements jump, text re-flows, and buttons move, all because the background code is struggling to resolve complex and conflicting scripts. To a human, this is a nuisance. To an automated engine, this is a red flag signifying a lack of professional quality and technical oversight. It is a loud, clear broadcast that your digital storefront is not stable, not modern, and not to be trusted.
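The instability described above can be measured rather than just observed. The following is a minimal sketch, assuming the Cumulative Layout Shift (CLS) metric from Google's Core Web Vitals as the yardstick; the text above does not name a metric, so that choice is an assumption. Each shift is scored as impact fraction times distance fraction, and CLS is the largest sum of scores within one session window (shifts less than 1 second apart, window capped at 5 seconds). The function names and sample values are hypothetical.

```python
def layout_shift_score(impact_fraction, distance_fraction):
    """Score of one shift: share of viewport affected times relative distance moved."""
    return impact_fraction * distance_fraction


def cumulative_layout_shift(shifts, max_gap=1.0, max_window=5.0):
    """Return the largest session-window sum of shift scores.

    `shifts` is an iterable of (timestamp_seconds, score) pairs.
    A new window starts when the gap since the previous shift reaches
    `max_gap` or the current window has lasted `max_window` seconds.
    """
    best = 0.0
    window_sum = 0.0
    window_start = None
    prev_time = None
    for t, score in sorted(shifts):
        starts_new_window = (
            prev_time is None
            or t - prev_time >= max_gap
            or t - window_start >= max_window
        )
        if starts_new_window:
            window_start = t
            window_sum = 0.0
        window_sum += score
        best = max(best, window_sum)
        prev_time = t
    return best


def rate_cls(cls):
    """Bucket a CLS value using Google's published thresholds (0.1 and 0.25)."""
    if cls <= 0.1:
        return "good"
    if cls <= 0.25:
        return "needs improvement"
    return "poor"


# Hypothetical page load: two shifts in quick succession, one later.
sample_shifts = [
    (0.2, layout_shift_score(0.5, 0.1)),
    (0.6, layout_shift_score(0.4, 0.1)),
    (3.0, layout_shift_score(0.2, 0.1)),
]
print(rate_cls(cumulative_layout_shift(sample_shifts)))
```

In practice the shift entries would come from browser field data or a lab tool rather than hand-written tuples; the sketch only shows how the number is assembled.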

The cost of this instability is measured in lost revenue. Every time a layout shift occurs, you lose a fraction of your audience. Those who do not leave immediately are still influenced by the subconscious perception of a “broken” site. When you combine this visual instability with slow loading speeds and poor data clarity, you create an environment that actively repels revenue. You are essentially paying to drive traffic to a location that is actively falling apart. The money spent on marketing, advertising, or legacy maintenance is being thrown into an infrastructure that cannot hold the weight of a modern customer. To recover your market position, you must strip away the cosmetic layers, address the deep-rooted instability of your code, and implement a rigid, high-performance architecture that guarantees your business is found, understood, and trusted every single time a customer reaches out for answers. Failure to act now ensures that your digital presence will continue to decline until it is entirely eclipsed by competitors who have prioritized the integrity of their data infrastructure.

2.0 The Information Engineering Foundation: Building a Machine-Readable Asset

To survive in the modern digital ecosystem, your website must cease being a static document and evolve into a sophisticated data system. This shift requires moving from visual-only presentation to a rigorous, machine-readable architecture that serves as a verified source of truth for your entire industry.

| Architectural Component | Engineering Function | Verification Metric |
|---|---|---|
| Semantic Data Layers | Categorizes business facts for direct ingestion. | Entity recognition confidence score. |
| Verified Identity Graph | Connects brand authority to established databases. | Knowledge graph presence and reach. |
| Predictive Schema Sets | Anticipates and provides data for automated queries. | Citation frequency in AI syntheses. |

- Core Technical Engineering Requirements:
  - Implementation of nested, industrial-grade data structures that provide unambiguous clarity.
  - Elimination of all conflicting information patterns that lead to entity ambiguity.
  - Rigorous validation of all code against international data-interoperability standards.
- Long-Term Authority Goals:
  - Becoming the single most reliable data source for all AI search agents in your niche.
  - Securing your brand reputation through consistent, verified data signals.
  - Reducing your dependence on paid discovery by becoming the default answer-engine choice.

2.1 Moving from unstructured text to structured data layers

The primary reason most websites fail to register authority is their reliance on unstructured text. Humans read sentences, but machines interpret nodes and relationships. When your information is presented in a long, narrative format without clear labeling, you are forcing the automated engine to perform a high-effort, low-reward task of guessing what your content means. This is an inefficient use of computational resources. To fix this, you must restructure your information into nodes: entities, attributes, and relationships. Every part of your business (your name, your physical address, the specific services you provide, and the regions you serve) must be explicitly defined in a format that does not require the machine to guess.

This is not merely about using keywords. It is about implementing a semantic data layer that exists behind the visuals of your site. This layer acts as a translator, ensuring that when an AI looks at your “About” page, it doesn’t just see a collection of sentences about a person; it identifies a professional entity with a specific history, affiliation, and demonstrated capability. This process of data structuring is what transforms a standard website into a machine-readable asset. It eliminates the margin for error and provides the machine with the absolute certainty it needs to trust your business above all others. Without this layer, you are effectively speaking in a language that your most important potential customers—the AI search agents—cannot fully understand.
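One common way to express such a semantic data layer is JSON-LD using the schema.org vocabulary. The sketch below is illustrative only: it assumes schema.org's `LocalBusiness`, `PostalAddress`, `Offer`, and `Service` types are a suitable fit, and the business name, address, and URL are hypothetical placeholders, not details from this article.

```python
import json


def build_business_jsonld(name, url, street, city, region, postal_code,
                          country, services, areas_served):
    """Describe a business as a schema.org LocalBusiness in JSON-LD."""
    entity = {
        "@context": "https://schema.org",
        "@type": "LocalBusiness",
        "name": name,
        "url": url,
        "address": {
            "@type": "PostalAddress",
            "streetAddress": street,
            "addressLocality": city,
            "addressRegion": region,
            "postalCode": postal_code,
            "addressCountry": country,
        },
        # Regions the business serves, stated explicitly rather than implied.
        "areaServed": list(areas_served),
        # Each service becomes its own labeled node instead of free text.
        "makesOffer": [
            {"@type": "Offer", "itemOffered": {"@type": "Service", "name": s}}
            for s in services
        ],
    }
    return json.dumps(entity, indent=2)


# Hypothetical example values.
markup = build_business_jsonld(
    name="Example Gem Appraisers",
    url="https://www.example.com/",
    street="123 Main St",
    city="Denver",
    region="CO",
    postal_code="80202",
    country="US",
    services=["Jewelry appraisal", "Gemstone identification"],
    areas_served=["Denver", "Boulder"],
)
print(markup)
```

The output would typically be embedded in a page inside a `<script type="application/ld+json">` element, so the name, address, services, and regions are labeled explicitly instead of being inferred from prose.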

2.2 The technical requirements of a high-trust digital presence

A high-trust digital presence is not built on aesthetics; it is built on verifiable data signals. To gain the trust of modern search ecosystems, you must provide consistent, redundant, and error-free evidence of your identity. The technical requirements include a unified URI strategy, which ensures that every link, resource, and page on your site has a clear and logical relationship to your core business identity. We also require a standard set of protocols for entity mapping, where your business is formally linked to global knowledge bases that define your industry and your professional standing.

This technical foundation also demands the eradication of broken links, phantom redirects, and redundant data sets that contradict each other. Any discrepancy in your business information, such as a mismatch between the address on your website and the one in your business registry, is a direct hit to your authority. We solve this by establishing a rigid hierarchy where your primary website acts as the central command for all truth. Every secondary platform, social account, and business directory must point back to this foundation. By maintaining a clean, error-free environment, you signal to the machines that your business is managed by professionals who understand the necessity of precision. Trust is the currency of the modern web, and for a machine, trust is defined by technical precision and internal consistency.
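The consistency requirement can be checked mechanically. The following is a minimal sketch, assuming each platform's listing has already been collected into a dictionary with `name`, `address`, and `phone` keys; the field names, source labels, and sample records are hypothetical. It treats the website record as the primary source and reports every secondary record that disagrees after light normalization.

```python
import re

TEXT_FIELDS = ("name", "address")


def normalize_text(value):
    """Lowercase, drop punctuation, and collapse whitespace."""
    value = re.sub(r"[^\w\s]", "", value.lower())
    return re.sub(r"\s+", " ", value).strip()


def normalize_phone(value):
    """Keep digits only so formatting differences are ignored."""
    return re.sub(r"\D", "", value)


def find_discrepancies(primary, secondary_records):
    """Return (source, field, primary_value, other_value) for each mismatch."""
    problems = []
    for source, record in secondary_records.items():
        for field in TEXT_FIELDS:
            if normalize_text(record[field]) != normalize_text(primary[field]):
                problems.append((source, field, primary[field], record[field]))
        if normalize_phone(record["phone"]) != normalize_phone(primary["phone"]):
            problems.append((source, "phone", primary["phone"], record["phone"]))
    return problems


# Hypothetical records: the website is treated as the source of truth.
website = {"name": "Example Gems", "address": "123 Main St., Suite 4",
           "phone": "(303) 555-0100"}
listings = {
    "directory": {"name": "Example Gems", "address": "123 Main St Suite 4",
                  "phone": "303-555-0100"},
    "registry": {"name": "Example Gems", "address": "125 Main St Suite 4",
                 "phone": "303-555-0100"},
}
for problem in find_discrepancies(website, listings):
    print(problem)
```

Here the directory listing passes because it differs only in punctuation and phone formatting, while the registry entry is flagged for its different street number.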

2.3 Establishing a “Primary Source of Truth” for your market

The final stage of your foundation is establishing yourself as the undisputed primary source of truth. When an AI agent is asked a question about a service in your industry, it is programmed to seek the most reliable source to generate its answer. It does not want to synthesize information from a dozen unreliable blogs; it wants to extract the facts from an authoritative entity that has proven its reliability. You achieve this status by organizing your digital content so that it answers the specific, high-intent questions of your market in a way that is easily extractable. Your site must essentially be a pre-compiled knowledge base for your specific field.

When you align your site with the actual queries of your customers (the “how-to,” the “how-much,” and the “where-do-I-find”) and structure these answers through precision data sets, you make it exceptionally easy for the machine to cite you. You become the partner of the machine rather than its obstacle. By maintaining this structure, you create a digital moat that is nearly impossible for competitors to cross. They might copy your words, but they cannot replicate the depth of your architecture, the consistency of your signals, or the trust you have built with the automated systems. You have become the backbone of the conversation, and you can leverage this authority to command your market share for years to come. This is the ultimate objective of the information engineering approach, and it represents the only viable path for sustainable, high-growth digital leadership in the current market.
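Question-and-answer content of this kind can be made extractable with schema.org's `FAQPage` type. This is a sketch under that assumption, not a description of the method used on any particular site; the questions and answers are hypothetical placeholders, and markup of this kind does not by itself guarantee that an answer engine will cite the page.

```python
import json


def build_faq_jsonld(qa_pairs):
    """Wrap (question, answer) pairs in a schema.org FAQPage structure."""
    page = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in qa_pairs
        ],
    }
    return json.dumps(page, indent=2)


# Hypothetical "how-much" and "where-do-I-find" questions.
faq_markup = build_faq_jsonld([
    ("How much does a jewelry appraisal cost?",
     "Placeholder answer stating the fee structure."),
    ("Where do I find a certified appraiser near me?",
     "Placeholder answer listing the service area."),
])
print(faq_markup)
```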

3.0 Strategic Displacement: Dominating Your Market Through Precision Architecture

Market leadership in the AI-driven era is not captured through aggressive spending on traditional advertising, but through the strategic displacement of competitors via architectural superiority. By engineering a digital presence that provides superior data density and technical stability, you render your competition effectively invisible to the automated systems that now guide customer acquisition.

| Strategic Domain | Displacement Mechanism | Market Outcome |
|---|---|---|
| Semantic Coverage | Mapping every intent-based query node. | Total capture of the decision funnel. |
| Structural Moat | Engineered barriers against bot scraping. | Protection of high-value intellectual assets. |
| Citation Monopoly | Optimizing for singular source verification. | Elimination of competing search options. |

- Core Displacement Strategies:
  - Mapping the complete intent landscape of your customers to ensure every query leads to your site.
  - Deploying high-density data nodes that exceed the complexity of standard competitor frameworks.
  - Maintaining superior technical uptime and performance standards to secure algorithmic preference.
- Strategic Competitive Advantages:
  - Dominance in generative summary windows where competitors fail to provide cited facts.
  - Conversion rates driven by “authority bias” where users select the verified primary source.
  - Reduced reliance on paid ad platforms as your organic structure solidifies your top-tier placement.

3.1 Analyzing the semantic gap in competitive niches

The “semantic gap” is the distance between what your customers are actually searching for and what your competitors are actually answering. In almost every industry, there is a massive discrepancy where potential clients are looking for deep, factual answers while incumbents are merely throwing broad keyword-heavy content at the wall. This gap represents your primary opportunity for strategic displacement. By auditing your competitors’ digital footprints, we identify the exact nodes of information they have failed to map or structure. These are the “blind spots” where your customers are currently being served incomplete or generic answers, creating a high-friction experience that drives them away.
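At its simplest, the audit described above is a set difference: the questions customers ask, minus the questions any competitor already answers. The sketch below assumes both lists have been gathered by hand or by a separate crawl; the function name, competitor labels, and queries are hypothetical.

```python
def semantic_gap(customer_queries, competitor_coverage):
    """Return customer queries that no competitor currently answers.

    `competitor_coverage` maps a competitor label to the queries it covers.
    Matching is exact after trimming and lowercasing, which is a
    deliberate simplification.
    """
    covered = set()
    for topics in competitor_coverage.values():
        covered.update(topic.strip().lower() for topic in topics)
    asked = {query.strip().lower() for query in customer_queries}
    return sorted(asked - covered)


# Hypothetical audit data.
queries = [
    "How is a sapphire graded?",
    "What does an insurance appraisal include?",
    "Where can I get an estate ring valued?",
]
coverage = {
    "competitor-a": ["How is a sapphire graded?"],
    "competitor-b": ["how is a sapphire graded?"],
}
for blind_spot in semantic_gap(queries, coverage):
    print(blind_spot)
```

A real audit would need fuzzier matching than exact string comparison, since two differently worded questions can express the same intent.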

Precision architecture allows us to bridge this gap by occupying the specific, high-intent query nodes that your competitors lack the technical sophistication to address. We don’t just write more content; we structure better answers. We map the nuances of your services, the specific regional challenges of your clients, and the technical requirements of your industry into a logical, graph-based hierarchy. While your competition is busy chasing vanity metrics like social engagement, you are busy building the actual answers to the problems your clients face. Once you have populated these nodes with verified facts, you effectively become the only logical candidate for the search engine to reference, forcing your competitors into the background of the digital landscape.

3.2 Engineering a technical moat against automated competitors

An automated technical moat is the ultimate defense against market erosion. In an era where AI can churn out generic blog posts by the thousands, the only thing that cannot be easily replicated is the depth and reliability of your data architecture. By designing your site as a proprietary data layer, you make it fundamentally difficult for low-effort competitors to “scrape” and duplicate your value. We utilize custom injection methods and proprietary linking strategies that ensure your content is not just text on a screen, but a unique, interconnected set of data points that only your business owns and controls.

Furthermore, this moat protects your intellectual property and your brand authority. When you structure your content with custom schema and deep entity binding, you create a complex, machine-readable fingerprint that is unique to your organization. If an automated system tries to replicate your content, the structure beneath it—the internal linkages, the specific metadata, and the logical hierarchy—remains distinctly yours. This complexity creates a barrier to entry that is prohibitively high for the “script kiddies” and generalist agencies that have dominated the digital space for years. You are not just building a website; you are building an information stronghold that grows more resilient and harder to duplicate with every passing month of consistent data ingestion.

3.3 Tactics for permanent, verifiable market authority

Permanent market authority is achieved by becoming the infrastructure upon which the industry operates. This is the difference between being a participant in a market and being the source of truth for that market. Our tactics focus on maximizing your citation rate—the frequency with which AI systems reference your site to explain, analyze, or provide answers to user queries. To achieve this, we prioritize the creation of “high-citation nodes”—specific pages that answer complex, long-tail queries with such factual density and structural perfection that the machine finds it physically impossible to ignore you.

These nodes are meticulously engineered to satisfy the machine’s requirement for clarity, brevity, and verification. We anchor them to global entities, provide unambiguous schema definitions, and maintain a clean, high-performance delivery layer that ensures the engine never hits a failure. As these nodes accumulate, your entire domain’s “citation score” rises, moving you from a candidate for search results to the default authority. This is a permanent shift in market dynamics. Once you have established this level of visibility, you essentially own the “first-answer” position. You have successfully displaced your competitors by becoming a better, faster, and more reliable source of knowledge. This level of authority compounds over time, as the systems that provide answers learn to trust your site as the only consistent partner in the industry, granting you a level of market stability that is simply not available to those who rely on outdated, unstructured search tactics.

4.0 Future-Proofing for the Modern Search Ecosystem

The transition toward ambient discovery and generative answers is permanent. To ensure your business survives this shift, you must move beyond the constraints of the traditional search bar and architect your digital identity to thrive within the new, automated intelligence layer that now mediates global commerce.

| Future Readiness Metric | Engineering Focus | Long-Term Survival Outcome |
|---|---|---|
| Ambient Discovery | Designing for voice and AI interface compatibility. | Dominance in non-visual search environments. |
| Data Integrity | Maintaining canonical sources of truth across APIs. | Resistance to AI “hallucination” and errors. |
| Scalable Resilience | Dynamic schema and automated content pipelines. | Uninterrupted visibility through algorithm updates. |

- Engineering for Ambient Discovery:
  - Structuring responses to satisfy conversational and intent-driven queries rather than rigid keyword matching.
  - Optimizing for multi-modal interfaces that require concise, verified factual outputs.
  - Ensuring that your business entity is identifiable across all digital assistants and AI platforms.
- Sustaining Long-Term Market Leadership:
  - Continuous auditing of your site’s machine-readability to stay ahead of evolving AI capabilities.
  - Expanding your digital node network to capture emerging search behaviors and intent patterns.
  - Investing in the structural integrity of your site as a primary business asset for a decade of utility.

4.1 Moving beyond the search bar: Optimizing for ambient discovery

The search bar is rapidly becoming a relic of the early internet. As consumers move toward ambient discovery—using wearable tech, smart home devices, and integrated operating systems—they are no longer “searching” in the traditional sense. Instead, they are engaging in a continuous dialogue with the digital world. This environment does not present the user with a page of blue links; it presents them with a synthesized, real-time answer. If your architecture is only built to “rank” in a static list, you will be entirely absent from this new discovery process. Optimization for ambient discovery means structuring your site as a library of answers that are ready to be read aloud, displayed on a dashboard, or analyzed by a personal assistant.

This requires a departure from legacy content production. You must now focus on the “atomization” of your knowledge: breaking complex service descriptions and business facts into precise, machine-readable chunks that can be fetched instantly by any device. We map your information so that whether a user asks an assistant on their phone or their smart speaker, the system already has the pre-digested, verified fact ready to deploy. You are optimizing for the “zero-click” environment, where the value lies in being the factual base for an answer rather than the destination for a redirect. By engineering your content to be perfectly context-aware and platform-agnostic, you ensure that your brand remains the constant, reliable voice in every digital interaction your customer initiates.
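The “atomization” idea can be illustrated with a small data structure. This is a sketch only, assuming business knowledge starts out as a nested description and that each leaf value should become one independently retrievable fact; the `Fact` class, the dotted attribute paths, and the sample data are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Fact:
    subject: str    # the entity the fact is about
    attribute: str  # dotted path such as "hours.monday"
    value: str      # the atomic value itself


def atomize(subject, description):
    """Flatten a nested description into a list of single-value facts."""
    facts = []

    def walk(path, node):
        if isinstance(node, dict):
            for key, child in node.items():
                walk(f"{path}.{key}" if path else key, child)
        elif isinstance(node, list):
            for item in node:
                walk(path, item)
        else:
            facts.append(Fact(subject, path, str(node)))

    walk("", description)
    return facts


def lookup(facts, attribute):
    """Fetch every value recorded for one attribute path."""
    return [fact.value for fact in facts if fact.attribute == attribute]


# Hypothetical business description.
facts = atomize("Example Gems", {
    "hours": {"monday": "09:00-17:00", "saturday": "10:00-14:00"},
    "services": ["Appraisal", "Repair"],
})
print(lookup(facts, "hours.monday"))
print(lookup(facts, "services"))
```

Each fact can then be served on its own, for example as a short spoken answer, without the consuming system having to parse a full service description.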

4.2 Ensuring long-term data integrity in a generative environment

In a generative environment, data integrity is your only protection against AI-driven inaccuracies. AI models learn by ingesting data from across the web, and if your data is messy, poorly labeled, or inconsistent, you are at risk of being misrepresented. The long-term survival of your brand depends on your ability to provide a clean, canonical source of truth that the AI can reliably reference. We achieve this by enforcing a strict data-governance policy across your entire digital footprint. We ensure that your business entity, contact details, service models, and geographic reach are locked into perfectly validated schema sets that are consistent across every single page of your site.
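Cross-page consistency of this kind can be audited automatically. The sketch below assumes each page embeds one JSON-LD object in a `<script type="application/ld+json">` element and that the HTML has already been fetched; it uses only Python's standard library, and the page paths, field list, and sample markup are hypothetical.

```python
import json
from html.parser import HTMLParser


class JsonLdExtractor(HTMLParser):
    """Collect the parsed contents of application/ld+json script elements."""

    def __init__(self):
        super().__init__()
        self.blocks = []
        self._inside = False
        self._buffer = []

    def handle_starttag(self, tag, attrs):
        if tag == "script" and dict(attrs).get("type") == "application/ld+json":
            self._inside = True
            self._buffer = []

    def handle_data(self, data):
        if self._inside:
            self._buffer.append(data)

    def handle_endtag(self, tag):
        if tag == "script" and self._inside:
            self.blocks.append(json.loads("".join(self._buffer)))
            self._inside = False


def extract_entity(html):
    """Return the first JSON-LD object on a page, or an empty dict."""
    parser = JsonLdExtractor()
    parser.feed(html)
    return parser.blocks[0] if parser.blocks else {}


def find_inconsistencies(pages, fields=("name", "telephone")):
    """Map each field with conflicting values to {value: [pages using it]}."""
    seen = {field: {} for field in fields}
    for path, html in pages.items():
        entity = extract_entity(html)
        for field in fields:
            seen[field].setdefault(entity.get(field), []).append(path)
    return {field: values for field, values in seen.items() if len(values) > 1}


# Hypothetical pages whose markup disagrees on the phone number.
template = ('<html><head><script type="application/ld+json">'
            '{{"@type": "LocalBusiness", "name": "Example Gems", '
            '"telephone": "{phone}"}}</script></head><body></body></html>')
pages = {
    "/": template.format(phone="+1-303-555-0100"),
    "/contact": template.format(phone="+1-303-555-0199"),
}
print(find_inconsistencies(pages))
```

A page with no structured data at all shows up under the value `None`, which flags missing markup as well as conflicting markup.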

Furthermore, we implement defensive measures against AI misinformation. By providing explicit, high-trust data signals, you help guide the model toward the correct factual understanding of your business, preventing it from confusing you with generic competitors or hallucinating non-existent service attributes. This proactive management of your “digital DNA” is the only way to maintain brand control in an era of automated content generation. You are not just building a website; you are maintaining a proprietary database that defines the reality of your company for the world’s most powerful intelligence engines. This rigor pays off over time, creating a level of brand stability that no amount of traditional marketing or social media effort could ever achieve.

4.3 Sustaining market leadership through zero-failure architecture

Sustaining market leadership requires an unwavering commitment to zero-failure architecture. The digital landscape will continue to fluctuate, with new protocols, new devices, and new AI capabilities appearing regularly. A fragile website will eventually break under the pressure of these changes, but a zero-failure architecture is engineered to endure. This approach relies on modularity, high-performance delivery, and strict adherence to open-web standards. We ensure your site is not dependent on temporary “hacks” or black-box plugins that may cease to function at any moment. Instead, we anchor your authority to the fundamental, enduring logic of linked data.

Your leadership position is secured through the relentless maintenance of this architecture. As the market evolves, we simply update the nodes and broaden the mapping, keeping your business at the center of the web’s knowledge graph. This is the most efficient and powerful way to manage your brand for the long term. You move away from the frantic cycle of “chasing the algorithm” and instead operate from a position of architectural dominance. You have built a fortress that cannot be easily breached, and a resource that is far too valuable for the major answer engines to ignore. This is not a short-term marketing tactic; it is the strategic, long-term engineering of your business’s digital sovereignty. By prioritizing structural integrity today, you are making the only investment that guarantees your business will remain the authority in your niche for the next decade and beyond. The future belongs to those who build the truth, not those who merely rent space within it.